- Full lifecycle coverage

Gain deep insight into behavior data, feedback data, model training, and application launch across the entire AI learning cycle to optimize computing, networking, storage, and resource scheduling.

- Hardcore AI technology

Self-developed infrastructure technologies enable AI-oriented computing, storage, and communication; a high-performance AI computing architecture supports trillion-dimensional feature processing.

- Lower computing cost

Reduce the total cost of ownership: for image classification, 4Paradigm SageOne costs just one-tenth as much as Google AutoML.

Advantages

Highlights

- High-performance storage tuning

4Paradigm Sage software is customized for improved feature processing, writing data to disk, and more.

Components are upgraded and optimized from the standard 4Paradigm Sage software.

-

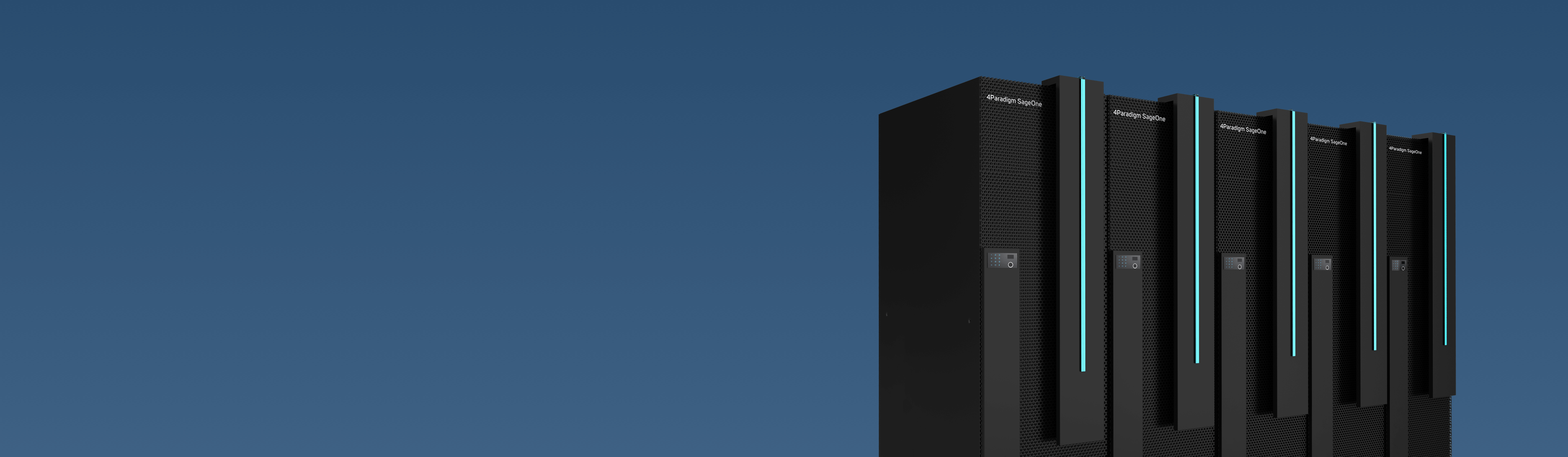

All-in-one delivery

Provide full-process delivery services, from servers and network equipment to software deployment and functional testing. Deliver a one-stop solution covering hardware preparation, network configuration, resource planning, component compatibility, and software deployment to shorten the overall project cycle.

- Horizontal scalability

Scale horizontally and linearly, expanding or contracting the cluster as needed.

- Cluster-based management and operation

Manage all-in-one clusters centrally through a single interface, with real-time global monitoring and operation.

Models and Features

- Machine Learning Training Engine (Advanced Edition) AR5200

High-dimensional machine learning framework

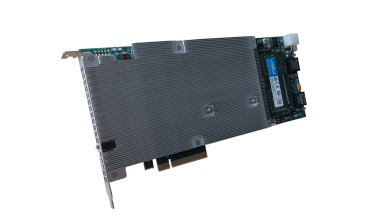

4Paradigm AI accelerator card

Industry-leading machine learning performance

- Machine Learning Inference Engine (Advanced Edition) AR5200

Feature calculation engine

Estimation service engine

Rapid AI inference

- Feature Storage Engine (Advanced Edition) AR5400

Unified data governance

In-memory time-series database RTIDB

Ultra-low latency online data services

- Super Machine Learning Training Engine AD8200

Ultra-high performance processor

Improved performance for more efficient ultra-high dimensional AI model training

- Deep Learning Training Engine (Advanced Edition) AR4400

Enterprise-level training accelerator card

Embedded deep learning algorithm libraries

Support multiple tuning modes such as Auto and Expert

Visualized full-process model training with support for manual intervention

- 4Paradigm AI Accelerator Card ATX900

Large memory size

Higher ML training performance

Business Scenarios

- Precision marketing
- Sales forecasting
- Anti-fraud and risk management
- Anti-money laundering